Technical Clarity: Chandrasekar Srinivasan emphasizes the need for clear architectural direction in project delivery.

AI Transformation: AI shifts project management from coordination to orchestration, enhancing efficiency without eliminating human roles.

Dependency Handling: AI-assisted summarization improves visibility and decision-making by consolidating project status and dependencies.

Agentic Workflows: Exploring agentic workflows enhances risk detection and project tracking across multiple teams, improving collaboration.

Trust in AI: Establishing trust in AI outputs is crucial for adoption; teams must prioritize reliability and transparency.

Chandrasekar Srinivasan is a Principal Engineering Manager at Microsoft. With over 15 years of experience across distributed systems, cloud, and AI, he has driven both early-stage and hyper-scale platform initiatives.

We sat down with him to get an insider's look into how he’s rethinking project delivery in an AI-native environment. Here's what he told us.

Technical clarity and executional alignment

I'm Chandrasekar Srinivasan, and I’m a Principal Engineering Manager at Microsoft. I lead big teams building large-scale Tier-0 cloud platforms and have over 15 years of experience in distributed systems, cloud and AI. Throughout my career, I’ve led both 0→1 and 1→100 platform initiatives across control-plane and data-plane systems, focusing on reliability, scale, security, compliance, and cross-organizational execution.

In project delivery, I create technical clarity and executional alignment. That includes setting architectural direction, resolving ambiguity, unblocking teams, driving alignment across partner organizations, escalating risks early, supporting customer commitments, and building the team through hiring and growth so it can deliver successfully.

Why AI transforms project delivery from coordination to orchestration

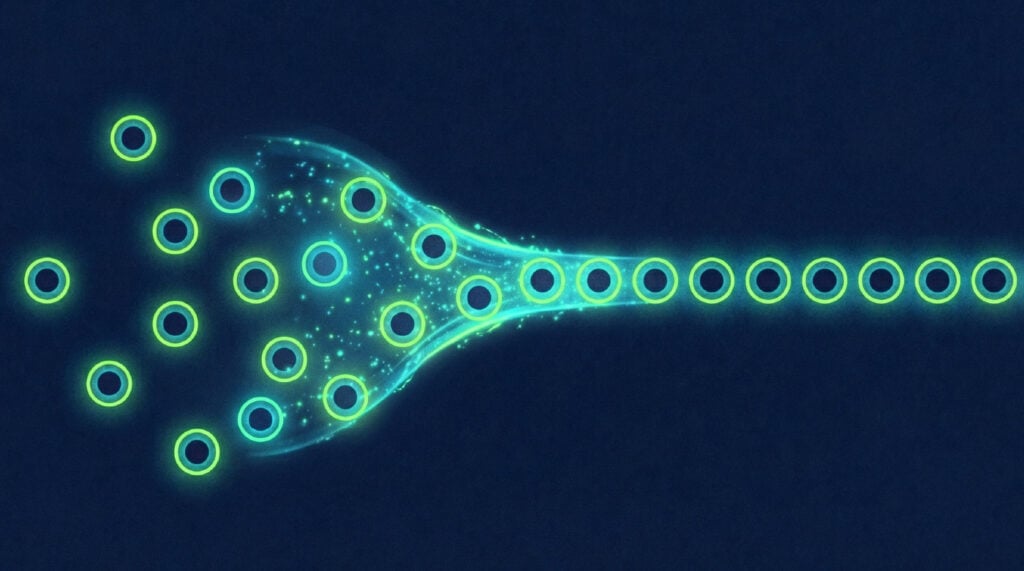

AI is becoming embedded in nearly every part of project delivery. It is not replacing leadership or engineering judgment, but it is significantly reducing the toil of execution. It is fundamentally shifting project management from coordination to orchestration.

My teams are using AI-assisted coding and code review to accelerate development, semantic chatbots to reduce support burden, and AI-based summarization to synthesize communication across meetings, threads, and documents. We are also using AI to speed up technical documentation and improve visibility through automated dashboards that highlight delays and execution risks.

As a result, I now spend less time on manual synthesis, repetitive documentation, and routine coordination. I spend more time on higher-value work: making architectural decisions, managing cross-team dependencies, handling ambiguity, improving prioritization, and helping teams focus on the most critical delivery risks.

How AI-driven approaches handle cross-team dependencies

In our environment, dependencies span dozens of teams, and updates are scattered across project systems, communication threads, and engineering workflows. Traditionally, stitching this together required significant manual effort, and we often identified risks later than we would have liked.

We introduced a lightweight V1 that brought together a focused set of signals from these systems — project tracking data, team updates, and communication artifacts — and used AI-assisted summarization to generate a consolidated view of project status and dependencies. We began to view this as a move toward a signal-first delivery model, where continuously evolving signals drive decisions rather than periodic status reports.

This approach has already shifted the team from spending time collecting updates to acting on them. More importantly, we are now on a path to productizing this capability — integrating it more deeply with our delivery systems so it becomes a standard part of how we manage project visibility and execution tracking, rather than a parallel workflow. The key takeaway for us has been that the value is not in fully automating delivery, but in creating a system that continuously improves visibility and decision-making at scale.

How AI supports automation and where human judgment still prevails

The areas most ready for AI support are status synthesis, dependency tracking, risk identification, documentation, routine communication, design, coding, and code review. AI can help by pulling information across systems, summarizing what changed, identifying likely blockers, highlighting execution gaps, and suggesting follow-up actions.

That said, none of these areas are truly “fully automatable” in a meaningful delivery environment. Human judgment is still essential for prioritization, tradeoff decisions, organizational alignment, conflict resolution, architectural direction, and people leadership. The best use of AI today is not removing humans from the loop, but reducing operational drag so that engineers and leaders can focus on the highest-value decisions.

Changes in our stack have moved us from static, manually maintained status artifacts to AI-assisted, query-driven workflows that surface insights faster and reduce manual overhead.

The tooling landscape changes quickly: what seems advanced today can soon become table stakes. Our stack increasingly incorporates AI tools such as:

- GitHub Copilot: We use it for coding acceleration, code suggestions, project tracking, and improving developer productivity during implementation. Automated code review support has been especially valuable and, in many cases, still underrated. It helps engineers catch issues earlier, improve code quality, and move faster without requiring the same level of manual review effort for every routine change.

  The biggest impact has been faster iteration speed and reduced review toil, while still allowing humans to focus on the deeper design and correctness questions.

- Azure Copilot / AI assistants: We use them for technical documentation, summarization, knowledge retrieval, and support workflows.

- Query-based reporting tools: We use them to monitor project health, execution delays, and dependency status.

How agentic workflows improve project tracking and risk detection

We are exploring agentic workflows for project tracking, dependency management, and execution risk detection. These are high-value areas because they involve a large amount of fragmented information and manual follow-up, especially when coordinating across many teams.

Some of the use cases include automatically detecting project delays, identifying dependency gaps, and tracking whether all required teams have acknowledged actions or commitments. In large environments involving 100+ teams, this becomes extremely valuable.
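
As an illustration only, the two checks named above (overdue items and missing acknowledgments) can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not the team's implementation: the `ActionItem` record, its field names, and the sample data are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date


@dataclass
class ActionItem:
    """A hypothetical cross-team commitment; field names are illustrative."""
    title: str
    due: date
    done: bool
    required_teams: set[str]
    acknowledged_by: set[str] = field(default_factory=set)


def find_delayed(items: list[ActionItem], today: date) -> list[ActionItem]:
    """Return open items whose due date has passed, most overdue first."""
    overdue = [i for i in items if not i.done and i.due < today]
    return sorted(overdue, key=lambda i: i.due)


def missing_acks(items: list[ActionItem]) -> dict[str, list[str]]:
    """Map each item title to the required teams that have not acknowledged it."""
    gaps = {}
    for item in items:
        missing = sorted(item.required_teams - item.acknowledged_by)
        if missing:
            gaps[item.title] = missing
    return gaps


# Made-up sample data for the sketch.
items = [
    ActionItem("Rotate certs", date(2024, 5, 1), False, {"net", "auth"}, {"net"}),
    ActionItem("Schema freeze", date(2024, 6, 1), False, {"data"}, {"data"}),
    ActionItem("Quota bump", date(2024, 4, 1), True, {"infra"}, {"infra"}),
]

delayed = find_delayed(items, today=date(2024, 5, 15))
gaps = missing_acks(items)
print([i.title for i in delayed])  # ['Rotate certs']
print(gaps)                        # {'Rotate certs': ['auth']}
```

Both checks are plain, deterministic set and date comparisons. In an agentic workflow, an agent would presumably run checks like these on a schedule and handle the follow-up, while the detection logic itself stays auditable.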

How lightweight systems replace obsolete project dashboards

Most project dashboards today are already obsolete — they just haven’t been replaced yet. We are moving away from heavily GUI-driven, manually curated project management systems toward lighter-weight, query-driven workflows.

The shift now treats project data as something teams can query and interact with dynamically — closer to a CLI or prompt-driven model. Instead of navigating multiple dashboards or maintaining static status documents, teams can ask targeted questions such as:

- “What are the top delayed dependencies this week?”

- “Which teams have not acknowledged critical actions?”

- “Where are we at risk of missing milestones?”

This shift is enabled by combining a predictable layer of structured project data with a probabilistic layer of generative AI. The generative layer interprets those signals in context: connecting updates across systems, identifying patterns, and surfacing insights not obvious from any single source.
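
One way to picture the two layers is a deterministic query that produces the facts, followed by a prompt that hands only those facts to a generative model. The sketch below assumes a hypothetical `dependencies` table with made-up columns and rows, and it stops at building the prompt instead of calling any particular model API.

```python
import sqlite3

# Hypothetical structured project data held in an in-memory database.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE dependencies "
    "(name TEXT, owner_team TEXT, days_late INTEGER, acknowledged INTEGER)"
)
conn.executemany(
    "INSERT INTO dependencies VALUES (?, ?, ?, ?)",
    [
        ("auth-token-v2", "identity", 9, 1),
        ("storage-quota-api", "storage", 3, 0),
        ("region-rollout", "infra", 0, 1),
        ("billing-export", "finance", 5, 0),
    ],
)


def top_delayed(conn, limit=3):
    """Predictable layer: a deterministic query over structured data."""
    rows = conn.execute(
        "SELECT name, owner_team, days_late FROM dependencies "
        "WHERE days_late > 0 ORDER BY days_late DESC LIMIT ?",
        (limit,),
    )
    return rows.fetchall()


def build_prompt(question, rows):
    """Input for the probabilistic layer: facts are supplied, not recalled."""
    facts = "\n".join(
        f"- {name} ({team}): {late} days late" for name, team, late in rows
    )
    return f"Question: {question}\nUse only these facts:\n{facts}"


rows = top_delayed(conn)
prompt = build_prompt("What are the top delayed dependencies this week?", rows)
print(prompt)
```

Keeping the query deterministic means the numbers in any generated answer can be traced back to a row, which is one plausible way to keep the probabilistic layer grounded.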

Why project management data must be decoupled from core project data as AI systems evolve

As systems evolve, we must also decouple project management data (like state, priority, etc.) from core project data, with clear guardrails for sensitive information. This ensures the predictable layer remains reliable and secure, while the probabilistic layer can safely operate on the right level of abstraction without exposing sensitive details. Decoupling project management data from core project data makes AI usable in enterprise environments.
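
A minimal sketch of this kind of decoupling, assuming a hypothetical allowlist of project-management fields: only those fields are projected into the view the AI layer sees, and everything else stays in the core system. The field names and the sample record are illustrative, not taken from any real schema.

```python
# Hypothetical allowlist of project-management fields that may reach the AI layer.
MANAGEMENT_FIELDS = frozenset({"id", "state", "priority", "owner_team", "due"})


def management_view(record: dict) -> dict:
    """Return only allowlisted fields; everything else stays in the core system."""
    return {k: v for k, v in record.items() if k in MANAGEMENT_FIELDS}


# Made-up core record mixing management metadata with sensitive details.
core_record = {
    "id": "WI-1042",
    "state": "blocked",
    "priority": 1,
    "owner_team": "storage",
    "due": "2024-06-30",
    "customer_name": "Contoso",          # sensitive: not in the allowlist
    "design_notes": "internal details",  # sensitive: not in the allowlist
}

safe = management_view(core_record)
print(safe)
```

An allowlist is used here instead of a denylist so that a newly added sensitive field is excluded by default until someone explicitly decides it is safe to expose.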

As the quality of the probability distribution behind the probabilistic layer improves, that layer filters noise more effectively and reduces hallucinations, which is critical for building trust in these systems. The difference between noise and insight is the quality of the probabilities. In practice, this reduces the need for manual status curation and shifts effort toward decision-making. Teams spend less time updating GUIs and more time interacting with live data through queries or prompts.

The result is lower reporting overhead, faster visibility into execution risks, and a more dynamic way of managing delivery—where the system continuously reflects reality rather than relying on periodically updated snapshots.

How AI support allows junior engineers to take on more complex tasks sooner

With AI support, I can assign more complex tasks to junior engineers earlier in their careers. The combination of AI-assisted guidance and structured validation allows them to operate at a higher scope while still maintaining quality. This not only accelerates execution, but also meaningfully improves learning velocity and growth for the team. AI is compressing the experience curve — engineers can take on tomorrow’s scope today.

Team alignment improves when AI can summarize discussions and highlight unresolved decisions. Validation becomes more efficient when AI assists with code review, documentation review, and traceability. That said, the fundamentals still matter. Scope still needs clear ownership. Alignment still requires real decision-making. Validation still needs engineering rigor.

Why adopting AI means establishing trust and balancing tension

Adopting AI sometimes creates tension between customization and standardization. Teams want workflows tailored to their needs, but at scale, leadership also needs consistency in measuring and acting upon delivery signals. Balancing those two has been one of my more interesting lessons.

Beyond balancing that tension, a team that wants to adopt AI must work on establishing trust. Lack of trust is the main barrier to adopting AI. Teams will use AI only if the outputs are accurate enough, explainable enough, and integrated well enough into their workflow to save time instead of creating more verification work.

The long-term winners will be the teams that treat AI not just as a productivity tool, but as a system that must earn trust through reliability, transparency, and consistent value.
